User-centered design systems that stand the test of time require research and testing to inform and validate ideas. As pressure to create leaner timelines mounts, how do we continue to deliver great work that requires thoughtful due diligence, in particular listening to user feedback?

Here are a few ways our design team has integrated testing into our design process without disrupting the overall project pace, while still receiving valuable feedback and instilling client confidence for a successful launch.

Conduct fewer tests: one is better than none

One way to incorporate usability testing into a tight timeline is to reduce the total number of tests per study. A study is a group of tests, and behind each study is a driving question (e.g., Will new students be able to register for new classes? Is the account creation process user-friendly?). It’s easy to think that we need a large pool of tests for testing to be worthwhile at all. This all-or-nothing mentality often leads designers on tight timelines to skip the process altogether.

In response to shorter project schedules, our design team has simplified our expectations around testing. Every individual user test yields valuable feedback that can help improve the design. If one person who clearly matches our primary audience segment is having trouble with the search functionality, we take time to improve the design before spending time on a second test. And if a second test is a luxury we can't afford, that single test still holds its value.

Jakob Nielsen explains why you only need to test with five users per study in order to accurately reflect a large user base. He goes on to explain that as few as two users per study can still be valuable. We’ve found this rule to hold true. Even one test will likely improve the design compared with skipping testing altogether. If you can afford up to five tests, great! It’s likely that the bulk of your time will be spent setting up one test that is then repeated across users, so adding a handful more is often not a big deal.

"For really low-overhead projects, it's often optimal to test as few as two users per study." - Jakob Nielsen, Ph.D.
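To make the reasoning behind small test counts concrete, here is a minimal sketch of the problem-discovery model that Nielsen's five-user guidance is based on: the share of usability problems found after n tests is 1 - (1 - L)^n, where L is the chance that a single user surfaces any given problem. The default of 0.31 is the average Nielsen reports across his own projects. It is an assumption here, not a number measured on our work, and the function name is purely illustrative.

```python
def share_of_problems_found(num_users: int, per_user_rate: float = 0.31) -> float:
    """Estimate the fraction of usability problems surfaced after num_users tests.

    Uses the model 1 - (1 - L)^n. The default per_user_rate (L) of 0.31 is an
    assumed average taken from Nielsen's published figures; your own projects
    may see a different rate.
    """
    if num_users < 0:
        raise ValueError("num_users must be zero or greater")
    if not 0.0 <= per_user_rate <= 1.0:
        raise ValueError("per_user_rate must be between 0 and 1")
    return 1.0 - (1.0 - per_user_rate) ** num_users


if __name__ == "__main__":
    # Compare a few study sizes to see how quickly the returns diminish.
    for users in (1, 2, 5, 15):
        print(f"{users:>2} users: {share_of_problems_found(users):.0%} of problems found")
```

Under these assumptions, the first test uncovers the largest share of problems and each additional test adds less, which is why a single test is still worth running and why iterating on the design between tests pays off.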

Keep in mind that most of our usability tests at Lullabot are 30-minute video calls where we ask the user to complete a task while we also gather qualitative data. Nielsen argues that qualitative interviews should make up the majority of your testing for the highest-quality feedback.

As we work to reduce the number of tests per study, we can also reduce the total number of tests for the entire project. The success of this approach hinges on prioritizing. Knowing when a test is needed is a call we make throughout the process as questions arise. Areas of the site that are designated as a higher priority during the initial design research process naturally receive special attention. So go ahead, book fewer user tests and feel confident that you’re adding value while also enjoying the time-saving benefits.

Iterate between individual tests

With each individual test, we learn a great deal and will likely gain a key insight for improving the design. Consider iterating on the design immediately following each test. Once the design has improved, schedule another test if needed and repeat the process. By iterating between tests, we can focus on what was learned from each individual user and truly take their feedback into consideration with a thoughtful improvement.

Another benefit of iterating between tests is that we’re able to create space in our day to keep producing design work. If we schedule multiple tests in one day, that day of productivity is lost, whereas scheduling one test per day keeps the project moving forward without a long interruption for usability testing.

Save time with sketch testing early in the process

Usability tests can be conducted with a variety of asset types. Examples of testable assets include:

- Paper sketch

- Singular digital wireframe page or component

- Digital wireframe prototype (no styles, multiple wireframes simulate interactivity or a multi-step experience)

- High-fidelity digital prototype (styles added, simulates interactivity or a multi-step experience)

- Singular high-fidelity page or component

- HTML prototypes (wireframe or high-fidelity)

- Existing sites

- Navigational tree

The best asset type for use in a test depends on the nature of the question you have. For example, testing the user-friendliness of a navigation interaction may prove ineffective with an asset as low fidelity as a paper sketch. Testing the effectiveness of a headline or a certain order of content on the page, on the other hand, is a great use of paper sketches.

Leaning on paper sketches early helps to keep testing fast and light. Their disposable nature encourages iteration and experimentation. Paper also has an inclusive quality: it invites participation from people of all technical backgrounds.

In addition to testing paper sketches, also consider tree testing as a way to quickly test things like navigation. No prototype, wireframe or design comp is needed to complete this test.

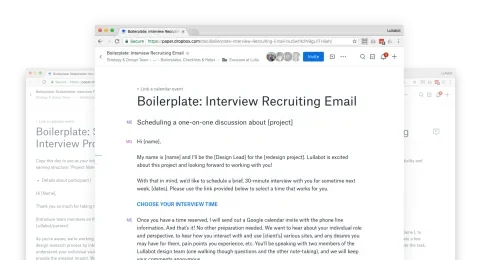

Save time with boilerplate templates

Over the last few years, our design team has worked to compile templates and boilerplates for design processes wherever possible. When we need to conduct usability testing on a tight timeline, these starter documents can be especially helpful. For example, we have an email template ready to go for recruiting users and an interview script that reminds us to ask permission to record and to pull together highlights before closing the tab. These tools are simple, yet each little line item that we don’t have to reinvent adds up over the course of a project. We like to use Dropbox Paper docs as a simple tool for text-based boilerplates. In fact, we use it for just about everything.

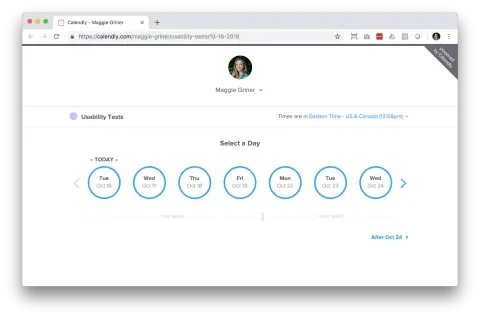

Lean on scheduling tools that integrate with your calendar

Scheduling is a sneaky task that can easily add many hours to your usability testing timeline. Using email can quickly become a time leak, as cancellations and rescheduling become a manual process. Calendly is a tool we often use for scheduling. With a free account, you can add available times and simply send your users a link to choose a time. You can even integrate Calendly with your Google Calendar so that the process is completely automated. All you need to do is keep an eye on your calendar and show up to the interview.


Ask for help from your client to recruit and schedule testers

Users are more likely to engage in a user test if they are recruited by a friend or colleague. We have made it a habit to ask clients for help in recruiting their users for testing. Being recruited by a familiar entity helps users feel more comfortable, and it gives our design team more time to focus on design work and creating the tests, rather than email correspondence. We provide the client with an email template that they can customize with their own voice, and a Calendly link to include in the email that allows users to schedule their own interviews.

Also, consider services like usertesting.com, where you can gain access to a community that will participate in tests. This approach could potentially save a lot of time, especially if you can match people to your target audiences easily. There are many community testing sites where you can choose the characteristics of the users you’d like to review the work.

Summary

Go forth, book fewer tests, ask for help from your clients, lean on new design tools, test early with sketches and create time-saving boilerplates. Most importantly, continue creating thoughtful, research-informed design systems.