Two Truths About Estimation

The first truth about large software projects is that it's nearly impossible to provide an accurate estimate at the outset. A modern website is a collection of small systems interacting to form a much larger system, one that is about as predictable as the weather or the stock market. In scientific terms, an enterprise-scale Drupal website is "fundamentally complex," and fundamentally complex systems tend to defy prediction.

The second truth about large software projects is that clients almost invariably require estimates, whether it's a fixed bid or a "back of the envelope" guesstimate they can take to their superiors to get an Agile project the green light. Because we love our clients, and very few of them live in a world of limitless budgets and flexible requirements, estimates are a necessary evil. So what's the aspiring estimator to do? In this article, I'll talk about how Lullabot uses a variation on the "Wideband Delphi method," a consensus-based estimation technique that grew out of the Delphi forecasting method developed at RAND Corp. in the early years of the Cold War. Don't worry! Our approach is not as fancy as it sounds.

Decomposing the Project

Before you can estimate, you have to break a project down into estimable pieces. In theory, this is simple enough. To quote my college computer science textbook, "Algorithms for solving a problem can be developed by stating the problem and then subdividing the problem into major subtasks. Each subtask can then be subdivided into smaller tasks. This process is repeated until each remaining task is one that is easily solved." Great, you might think. That sounds easy! I have time to catch up on my correspondence moves at chessatwork.com. In reality, it's always difficult to come up with a Work Breakdown Structure (W.B.S.), the fancy term for a long and comprehensive list of the small, estimable tasks required to complete a humongous project. There are many different approaches to decomposing a project; exploring them all in detail is out of scope for this article, but I'll briefly touch on a few of the most popular.

If the requirements are unknown, they must be elicited, which is a fancy way of saying that it's time to sit down in a room with your stakeholders for three days and ask a bazillion questions. I like to pretend I'm a detective investigating a murder, leaving no stone unturned. Usually Lullabot will do a one-week onsite, and then go back to the basement to write a "Vision and Scope" or "Software Requirements Specification" document, which breaks the project into 10 to 20 major features, with detailed descriptions of the requirements and assumptions behind each one. These features can then be broken down into smaller and smaller problem sets, and we then describe how Drupal typically solves each of them.

When we're working with a client accustomed to Agile/Scrum, we may not need to produce a full estimate, but we'll still develop a project backlog, expressed first as epics and then broken down into user stories. The user stories become the estimable elements, as well as the basis for recommending Drupal solutions.
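The stepwise subdivision described above maps naturally onto a tree whose leaves are the estimable tasks. The Python sketch below is only an illustration, not Lullabot's tooling: the `Task` class, the sample tasks, and the 40-hour ceiling for an "easily solved" leaf are all assumptions made for the example.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Iterator, Optional


@dataclass
class Task:
    """One node in a hypothetical Work Breakdown Structure tree."""
    name: str
    hours: Optional[float] = None  # only leaf tasks carry an estimate
    subtasks: list[Task] = field(default_factory=list)


def leaves(task: Task) -> Iterator[Task]:
    """Yield the leaf tasks (the estimable pieces), depth first."""
    if not task.subtasks:
        yield task
    else:
        for sub in task.subtasks:
            yield from leaves(sub)


def needs_breakdown(task: Task, max_hours: float = 40) -> list[str]:
    """Names of leaves that are unestimated or still too big to be 'easily solved'."""
    return [t.name for t in leaves(task) if t.hours is None or t.hours > max_hours]


def total_hours(task: Task) -> float:
    """Sum the estimates of all leaves; unestimated leaves count as zero."""
    return sum(t.hours or 0 for t in leaves(task))


site = Task("News site", subtasks=[
    Task("Article content type", subtasks=[Task("Fields", 4), Task("Edit form tweaks", 8)]),
    Task("Homepage", subtasks=[Task("Layout", 16), Task("Featured views", 12)]),
    Task("Data migration"),  # not yet understood well enough to estimate
])
print(total_hours(site))      # 40
print(needs_breakdown(site))  # ['Data migration']
```

Anything returned by `needs_breakdown` is a signal to keep subdividing, or to go back and ask the stakeholders more questions.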

Our Approach to Project Decomposition

Oftentimes, however, there isn't a discovery budget, you can't sell Agile, or you're working off an RFP. In these scenarios, I would advise breaking the project down into existing Drupal solutions. What are the content types, the views, the taxonomy requirements, the menus, the blocks, etc.? Will you require the Panels or Context modules to help with blocks or layout? Knowing the tools ahead of time is a huge advantage when it comes to making estimates. When you've already used solutions like Panels, Services, Solr, Migrate, or Features to solve problems in the past, there are fewer unknowns, and you can estimate from past experience. Lullabot has a template we like to use that helps us think about large enterprise-level websites in terms of their constituent Drupal building blocks. Don't forget about non-functional requirements and other work that's typically invisible to users but still important, such as deployment processes and hosting requirements.

Whichever method you use to decompose the project, at the end of the day you'll likely end up with a spreadsheet, which is still the best estimation tool I've found. I've seen everything from OmniOutliner to a combination of playing cards, tequila shots, and a whiteboard used to capture estimates, but I think spreadsheets are a sober choice. This spreadsheet represents your best guess at the work involved in completing the project.

Here's a link to our base Google Spreadsheet. To learn more about how to use it, read this fantastic follow-up to this piece.

A Typical Work Breakdown Structure

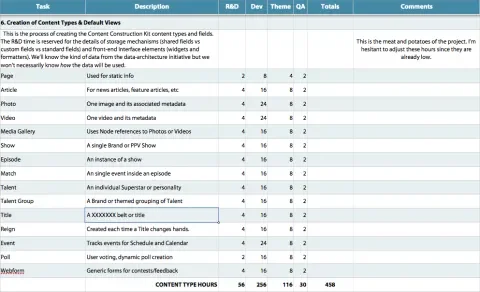

Here's a spreadsheet showing how we typically organize our Work Breakdown Structure

Notice how we have columns for R&D, development, theming, and quality assurance for each item. We treat project management hours separately, as overhead across the whole project. Depending on the type of project, the client's internal project management resources, and the client's culture, we've historically found that project management accounts for between 20 and 35 percent of the total budget. The higher end of that range is reserved for clients who have never been involved in an enterprise CMS deployment.
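To see what the 20 to 35 percent range means in hours, the sketch below sums the per-discipline columns and then treats project management as a share of the total budget, so that total = work / (1 - share). That reading of "percent of the total budget" is an interpretation; if you instead add PM as a percentage on top of the work hours, the figures come out lower. The `WbsRow` class and the sample rows are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WbsRow:
    """One hypothetical W.B.S. line item with per-discipline hour columns."""
    item: str
    rnd: float = 0
    development: float = 0
    theming: float = 0
    qa: float = 0
    comments: str = ""  # where estimators record their assumptions

    @property
    def hours(self) -> float:
        return self.rnd + self.development + self.theming + self.qa


def budget_with_pm(rows, pm_share):
    """Return (work, pm, total) hours, with PM making up pm_share of the total."""
    if not 0 <= pm_share < 1:
        raise ValueError("pm_share must be at least 0 and less than 1")
    work = sum(row.hours for row in rows)
    total = work / (1 - pm_share)
    return work, total - work, total


rows = [
    WbsRow("Article content type", rnd=4, development=24, theming=16, qa=8),
    WbsRow("Site search (Solr)", rnd=16, development=40, theming=12, qa=12),
]
for share in (0.20, 0.35):
    work, pm, total = budget_with_pm(rows, share)
    print(f"PM at {share:.0%}: work {work:.0f} h, PM {pm:.1f} h, total {total:.1f} h")
```

Running both ends of the range side by side makes it easy to show a client how much their own PM capacity and CMS experience affect the bottom line.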

It's also sometimes useful to organize the W.B.S. into sprints: chunks of work no more than a month long. This exercise helps you start to get a handle on the timeline, and forces you to think about the priority and sequence of the tasks. For every four sprints, consider building in a slush sprint with no explicit goals other than absorbing the inevitable delays of a project, squashing pernicious bugs from past sprints, and handling change orders. Most change control processes in contracts allow for the theoretical possibility of a change order, but almost never set aside time in the timeline to actually deal with one.
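Here is a rough sketch of that planning step: tasks, already in priority order, are packed into sprints without reordering (a simple in-order, first-fit heuristic), and a slush sprint is appended after every fourth working sprint. The per-sprint capacity figure and the sample tasks are assumptions you would replace with your own team's numbers.

```python
def pack_into_sprints(tasks, capacity):
    """Fill sprints in priority order, starting a new sprint when one is full.

    tasks is a list of (name, hours) tuples; capacity is the (hypothetical)
    number of team hours available per sprint.
    """
    sprints, current, used = [], [], 0.0
    for name, hours in tasks:
        if hours > capacity:
            raise ValueError(f"{name!r} exceeds one sprint; break it down further")
        if used + hours > capacity:
            sprints.append(current)
            current, used = [], 0.0
        current.append(name)
        used += hours
    if current:
        sprints.append(current)
    return sprints


def add_slush_sprints(sprints, every=4):
    """Insert a goal-free slush sprint after every `every` working sprints."""
    plan = []
    for i, sprint in enumerate(sprints, start=1):
        plan.append(sprint)
        if i % every == 0:
            plan.append(["SLUSH: delays, bug fixes, change orders"])
    return plan


tasks = [(f"Task {n}", 60) for n in range(1, 11)]  # ten 60-hour tasks
plan = add_slush_sprints(pack_into_sprints(tasks, capacity=120))
for number, sprint in enumerate(plan, start=1):
    print(f"Sprint {number}: {', '.join(sprint)}")
```

Because the packing never reorders tasks, the resulting timeline reflects the priority you set in the W.B.S.; a smarter bin-packing approach might squeeze in more work but would obscure that sequence.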

How Much is this Bad Boy Gonna Cost?

Now it's time to get down to the nifty business of estimating. The first step is to decide on a unit of estimation. At Lullabot, we use ideal programmer hours: how many hours would this take if the programmer were left alone in distraction-free, Red Bull-fueled bliss to crank out code, write Selenium tests, or theme the item? We are flexible with increments, meaning we make our best guess. The truth, though, is that the larger the estimate, the higher the probability of inaccuracy. For this reason, it's often useful to confine your estimates to a fixed set of increments, such as 15 minutes, or 1, 2, 3, 4, 8, 12, 16, 24, 32, or 40 hours. It's silly to argue about whether a task will take 30 or 32 hours, because, well…hard saying, not knowing. My belief is that any task likely to take more than one developer-week should be broken down further, and the subtasks re-estimated. If you've done your job on the W.B.S., most tasks should come in at the lower end of the spectrum, where you have the luxury of greater precision.
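If you want a script that checks the spreadsheet to enforce those increments, one option is to round every raw guess up to the next allowed value and flag anything above 40 hours for further breakdown. Rounding up, rather than to the nearest increment, is a conservative choice assumed for this sketch, not something the method prescribes.

```python
import bisect
from typing import Optional

# Allowed estimate sizes in hours (0.25 h = 15 minutes), as listed above.
INCREMENTS = [0.25, 1, 2, 3, 4, 8, 12, 16, 24, 32, 40]


def snap_up(raw_hours: float) -> Optional[float]:
    """Round a raw guess up to the next allowed increment.

    Returns None when the guess exceeds the largest increment (one
    developer-week), signalling that the task should be broken down.
    """
    if raw_hours <= 0:
        raise ValueError("estimates must be positive")
    i = bisect.bisect_left(INCREMENTS, raw_hours)  # first increment >= raw_hours
    return INCREMENTS[i] if i < len(INCREMENTS) else None


for guess in (0.1, 5, 30, 32, 60):
    snapped = snap_up(guess)
    print(guess, "->", snapped if snapped is not None else "break it down")
```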

Next, select two or more engineers from your team, including everyone who was involved in decomposing the project into tasks. Distribute an estimate-free copy of the W.B.S. spreadsheet to each estimator, and ask them to provide estimates for each item while documenting their assumptions in the "Comments" column. Documenting assumptions is critical for consensus, and a must for good contracts. If these assumptions are documented and then written into the contract, and one of them later turns out to be false, it is easy, and contractually possible, to make the case to the client that the original estimate should be tossed out and the bid revised.

Now the inestimable joy of estimation really begins. First, it's helpful to have a disinterested project manager play moderator. Ideally this person hasn't estimated the tasks, and therefore has no preconceived notions. The moderator gathers everyone on Skype, a conference line, or even (gasp) an actual, physical room, and shares an estimate-free copy of the spreadsheet as a Google Doc. Now everyone reveals their estimates, along with the assumptions behind them. For many tasks, the estimates will be very close, and there will be little need to dig into assumptions; in these instances, the moderator can simply post the average to the master spreadsheet. If, however, the estimates diverge wildly, it's time to discuss assumptions. One developer might have assumed a few Drush commands would be sufficient to administer a custom module, while another might have assumed a full-featured UX. Once everyone agrees on the assumptions, and the moderator has documented them, the engineers re-estimate. Hopefully the results are closer. Sometimes, after exchanging assumptions, the estimators will actually swap positions, with the high bidder going low and vice versa. This iterative revision of each estimator's original bid, in light of the group's reasoning, is what puts the "Delphi" in Wideband Delphi estimation.
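The moderator's triage at each reveal can be sketched as follows: tasks whose estimates fall within some tolerance are averaged, and the rest are set aside for a discussion of assumptions. The 1.5x high-to-low ratio used as the tolerance and the estimator names are hypothetical; the article leaves "very close" to the moderator's judgment.

```python
from statistics import mean


def delphi_round(estimates, max_ratio=1.5):
    """Split one round of blind estimates into agreed and divergent tasks.

    estimates maps task -> {estimator: hours}. A task counts as agreed when
    the highest estimate is at most max_ratio times the lowest; agreed tasks
    get the average, divergent ones go back for a discussion of assumptions.
    """
    agreed, divergent = {}, {}
    for task, by_estimator in estimates.items():
        values = list(by_estimator.values())
        low, high = min(values), max(values)
        if low > 0 and high / low <= max_ratio:
            agreed[task] = mean(values)
        else:
            divergent[task] = by_estimator
    return agreed, divergent


round_one = {
    "Article content type": {"ana": 8, "ben": 12},
    "Custom module admin": {"ana": 4, "ben": 32},  # Drush-only vs. full UX?
}
agreed, divergent = delphi_round(round_one)
print("Post to master sheet:", agreed)
print("Discuss assumptions:", list(divergent))
```

A ratio rather than an absolute difference is used so that "close" scales with the size of the task: 8 versus 12 hours is a reasonable spread, while 4 versus 32 almost certainly hides a disagreement about scope.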

The key here is to keep the conversational tone loose and friendly, and to continue the cycle of estimate, discuss, estimate, discuss. The goal is consensus on a best-guess estimate, with the group's assumptions documented. If you haven't reached consensus after three rounds of estimation, it's probably because, at heart, the estimators don't agree on the assumptions. This is almost always due to either too many unknowns or programmer hubris ("That won't take 12 hours, it's just an alter hook!"). At this point, it's best to move on, highlight the contentious row in yellow, and assign someone to start eliminating the unknowns. If, due to timeline, you only have one shot at producing a final estimate, consider presenting the client with a range, along with the assumptions that would push the estimate toward one end of that range or the other. There may be items you simply won't bid. Consider adding a time-and-materials clause to the contract to cover risky, inestimable work that defies prediction. (See: data migration.) At the end of this process, you will hopefully have a comprehensive estimate that can be attached to the Statement of Work, providing a detailed and, with luck, accurate prediction of the number of hours required to complete the project.
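The stopping rule above, at most three rounds before flagging the item and falling back to a range, could look like this for a single task. The consensus tolerance (a hypothetical 1.5x high-to-low ratio) and the choice to report the final round's lowest and highest estimates as the range are assumptions made for the sketch.

```python
def resolve_task(rounds, max_rounds=3, max_ratio=1.5):
    """Apply a three-rounds-then-flag rule to successive estimates for one task.

    rounds is a list of {estimator: hours} dicts, oldest first. Returns
    ("consensus", average), ("discuss_again", None), or
    ("flag_yellow", (low, high)) once max_rounds pass without agreement.
    """
    if not rounds:
        raise ValueError("need at least one round of estimates")
    for estimates in rounds[:max_rounds]:
        values = list(estimates.values())
        low, high = min(values), max(values)
        if low > 0 and high / low <= max_ratio:
            return ("consensus", sum(values) / len(values))
    if len(rounds) < max_rounds:
        return ("discuss_again", None)
    final = list(rounds[max_rounds - 1].values())
    return ("flag_yellow", (min(final), max(final)))


converging = [{"ana": 4, "ben": 32}, {"ana": 8, "ben": 24}, {"ana": 12, "ben": 16}]
stuck = [{"ana": 4, "ben": 32}, {"ana": 6, "ben": 30}, {"ana": 8, "ben": 24}]
print(resolve_task(converging))  # ('consensus', 14.0)
print(resolve_task(stuck))       # ('flag_yellow', (8, 24))
```

A flagged range like (8, 24) is exactly what you would present to the client, together with the assumptions that would move the real number toward either end.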

Extra Credit

To introduce even more fun into the process, and to make sure the estimates are blind, consider using Planning Poker, a great virtual estimation game that has the added benefit of including a two-minute timer for each round of discussion.

The Blameless Autopsy

Find a way to log time on projects (we happily use http://www.letsfreckle.com), and consider logging time at least to sprint-level granularity so that you can go back and perform a blameless autopsy on your estimates. Ask what, if anything, went wrong: why did 'Sprint 6: Data Migration' take 47 times as long as predicted? Note the types of sprints that come up again and again as culprits in overruns, and adjust your future estimates accordingly. If you're really meticulous, consider entering your W.B.S. directly into a project management system as tasks; we use Jira for this. After the project is complete, go back and review the actuals against the estimates to see what happened. You'll learn a lot, and this historical data should help guide your future estimates.
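Once estimates and logged actuals are available per sprint, ranking sprints by their actual-to-estimate ratio is a quick way to surface repeat offenders. In this sketch the two dictionaries stand in for a time-tracker export; their structure and numbers are made up for illustration (chosen to reproduce the 47x migration example above).

```python
def overrun_report(estimated, actual):
    """Rank sprints by actual/estimated hours, worst overrun first.

    Both arguments map sprint name -> hours; a sprint with no logged
    time counts as zero actual hours.
    """
    ratios = []
    for sprint, est in estimated.items():
        if est <= 0:
            raise ValueError(f"{sprint}: estimate must be positive")
        ratios.append((sprint, actual.get(sprint, 0) / est))
    return sorted(ratios, key=lambda pair: pair[1], reverse=True)


estimated = {"Sprint 5: Theming": 120, "Sprint 6: Data Migration": 8}
actual = {"Sprint 5: Theming": 132, "Sprint 6: Data Migration": 376}
for sprint, ratio in overrun_report(estimated, actual):
    print(f"{sprint}: {ratio:.1f}x estimate")
```

Collected across several projects, these ratios give you a rough per-category correction factor to apply to the next round of estimates.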

In Conclusion

Human beings, Nostradamus aside, are bad at predicting the future. A consensus-based approach that depends on the blind estimates of multiple engineers, combined with iterative refinement, can go a long way toward mitigating that uncertainty.