This year, MozCon demonstrated that Google has effectively killed SEO, in the sense of getting more traffic to your site through natural search rankings.

Much of the conference fell back on some simplistic bromides around branding, social media, mobile, and the like, general digital marketing stuff that has been hashed and rehashed before, and in much greater depth. This content might be inspiring. It goes down like cotton candy and gives you a temporary sugar high. But for those who have any experience at all in the digital marketing arena, it just didn’t offer that much substance for you to sink your teeth into.

The talks also didn’t offer many practical steps to help smaller business owners compete on an increasingly uneven playing field. When a competitor can influence search rankings through a national TV campaign, what do you do? SEO has traditionally helped even the odds in this regard. There was some good, worthwhile content (which I’ll get to in a moment), but very little of it that could be called “SEO” anymore, and the lack of such practical content was noticeable. Based on conversations with attendees who have been coming for years, this was a major shift from previous iterations of the conference. Of course, the changing landscape does provide some interesting opportunities for those who are creative and tenacious enough to take advantage. As Wil Reynolds noted, VRBO was beaten in 18 months by Airbnb. Airbnb ranks #9 for vacation rentals. VRBO still ranks #1 for the phrase, and it doesn’t matter.

But of course, killing SEO has been one of Google’s goals for a long time. Google wants you to buy ads. That’s how it gets most of its revenue. As long as there are known ways to raise your rankings in organic results, then your need for ad spend goes down.

There are still ways to increase your rankings and get more traffic naturally. On-site structure and best practices, link building, social signals, and user engagement still have their place. What’s missing is the ability to measure effectiveness. It has become a black box of mystery. Even if you follow Google’s own rules and recommendations 100%, to the letter, there is no guarantee that it will actually help you get more traffic. This adds uncertainty, which leads to paying more for organic rankings with less definable ROI, which in turn makes the grass over on the paid Google AdWords side look so much greener.

It starts with the dreaded “not provided” referrer in analytics. Over 70% of all searches are encrypted and are classified as “not provided”, meaning that marketers are blindfolded most of the time when trying to discover how users are reaching their site (unless, of course, they pay for ads. Then the keyword data shows up just fine.)

Add to this the various search algorithm updates and the heavy-handed punishment Google lays down on some online publishers, and you have people scared for their lives, too timid to try anything new that may cross the line. Those who have figured something out are not going to share it publicly anytime soon.

Even the best talks at MozCon, the ones that had clear examples to back up their assertions, had a large amount of uncertainty baked in. It’s like playing a game that generates random dungeon layouts each time. Everything is recognizable, the textures and colors familiar, but you still have to find your own way through the dark maze, and hope you stumble upon the treasure. It has made it much harder for the little guy to compete with big brands. And in many cases, it has sites competing against the Google giant itself for real estate in the search results.

There are no organic listings above the fold. Zero. This query shows nearly all ads.

So what can we do to increase visibility of our web real estate? There are four broad topics that were worthwhile and were covered in various talks, with some highlights and my personal takeaways. Part one will cover the first key topic: the resurgence of on-site SEO.

Onsite SEO

Onsite SEO used to be the only SEO. Your content structure and keywords. What you said about yourself is how your rankings were determined. Google came along and said that what other people said about you is more important, and backlinks and their anchor text were a key factor. With the work of Google’s search spam team and other initiatives like machine learning, the landscape has changed and morphed, and it looks like we are coming full circle.

We are now seeing a renaissance in the importance of onsite optimization, for two main reasons.

Reason 1: Google’s Knowledge Graph

Pete Meyers talked about disappearing organic rankings, with ads, shopping results, local listings, videos, and other Google properties taking up more and more space. Google does not want you to leave their ecosystem. You can see this further when Google just provides a direct answer to your question, solving your need immediately.

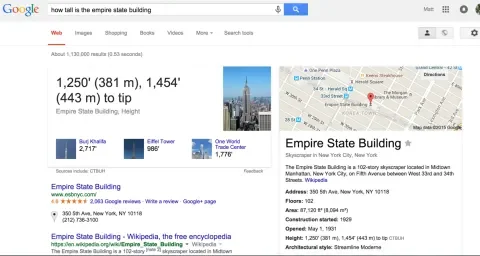

This is a great user experience; it saves the user clicks and time. Google’s Knowledge Graph powers these answers, and you see it in more detailed action if you type in a query that generates a knowledge card. Meyers used the Space Needle as an example, but for this article we’ll use the Empire State Building.

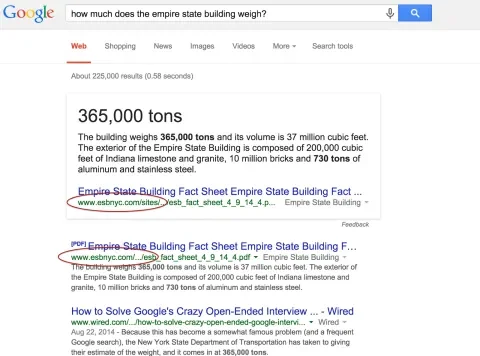

The data is structured, and you can play around. The answer it spit out is listed as the “Height.” We can play some quick Jeopardy to see if we can get it to return any other parts of that knowledge, and we find that it does this really well.

The problem is that it is editorially controlled, so it doesn’t scale. What happens when there is nothing to return from the structured data? We get a rich snippet that looks like a direct answer, but the text is actually pulled from the first ranking result.

But these rich snippets don’t always pull from the first organic ranking. Ranking does matter...but all you have to do is get on the first page of the search results. After that, Google determines from that limited list what content to feature. How does it determine which one to use? Based on the on-page semantics. Your content can directly shape the SERPs (search engine results pages). And once your content is featured in the rich snippet, you effectively double your search real estate, and some have seen significant CTR increases for those queries.

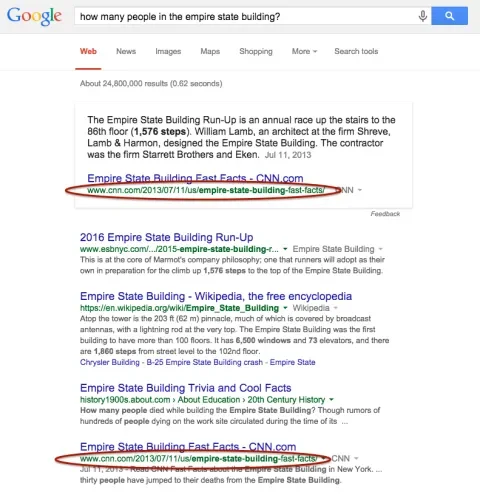

Another example. In the image below, you’ll see CNN has claimed the rich snippet, even though they are the fourth result.

What’s interesting is that mobile seems to be the driver of these rich snippets (and a lot of the other redesigns Google has been implementing). Do the same query on a smaller device, and the rich snippet fills up the space above the fold. There are also some hints that voice is driving these changes. When you ask a question, what does Google Now (or Siri) tell you? They need structured data to pull from, and in its absence, they need to fill in the gaps to help with scale.

Reason 2: Machine Learning and Semantics

Rand Fishkin’s talk on on-site SEO went through the evolution of Google’s search engine, one that had a bias against machine learning, and was dependent on a manually crafted algorithm. This has changed. Google now relies heavily on machine learning, where algorithms don’t just know the manually inputted factors for ranking, they come up with their own rules for what makes a good results page for a given query. The machine doesn’t have to be told what a cat is, for example. It can learn on its own, without human intervention.

This means that not even Google’s engineers know why a certain ranking decision has been made. They can get hints and clues, and the system can give them weights and data. But for the most part, the system is a black box. If Google doesn’t know, we certainly can’t know. We can continue to optimize for classic ranking inputs like backlinks, content, page load speed, etc. However, their effectiveness will continue to get harder and harder to measure. If we want to prepare better for the future, we’ll have to focus more on, and get better at, optimizing for user outputs. Did the searcher succeed in their task, and what signals that? CTR? Bounce rate? Return visits? Length and depth of visit?

Based on some experiments that have been run, the industry knows that Google takes into account relative CTR and engagement. If users land on your result and spend more time on your site versus other results for the same query, you have a vote in your favor. If users click the back button quickly after visiting your page, that doesn’t bode well for your rankings.

Rand offers five ways to start optimizing for this brave new world:

- Get above your ranking’s average CTR. Optimize title, description, and URL for click-throughs instead of keywords. Entice the click, but make sure you deliver with the content behind the headline. This is an age where even the URL slug might need a copywriter’s touch. And since Google often tests new results, it could be worth it to publish additional unique content around the same topic, to get it just right.

- Have higher engagement than your neighbors for the same queries. Keep them on the page longer, make it easier for them to go deeper into your content and site, load your site fast, invest in fantastic UX, and don’t annoy your visitors. Offering some type of interaction is a good idea. Make it really hard for them to click that back button.

- Fill gaps in knowledge. Google can tell what phrases and topics are semantically related for a given space, and can use criteria to determine if a page will actually fulfill a user’s need. For example, if your page is about New York, but you never mention Brooklyn or Long Island, your page probably isn’t comprehensive. Likewise, articles on natural language processing are probably going to include phrases like “tokenization” and “parsing.”

- Earn more social shares, backlinks, and loyalty per visit. Google doesn’t just take shares, alone on an island, as a ranking factor. Raw shares and links don’t mean as much. If you earn them faster than your competition, however, that’s a stronger signal. Your ratio of shares to visits needs to be better. You should also aim to get more returning visitors over time.

- Fulfill the searcher’s task, not just their query. If a large number of searchers keep ending up at a certain location, Google wants to get them to that destination faster without them having to go through a meandering path. If people searching for “best dog food” eventually stop their searching after they end up at Orijen’s website, then Google might use that data to rank Orijen higher for those queries. Based on Chrome’s privacy policy, they are definitely getting and storing this data. Likewise, some queries are more transactional, and results can be modified accordingly. Conversion rate optimization efforts might therefore help with search visibility.
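The first item on that list, beating the average CTR for your ranking position, is easy to check with a few lines of Python. What follows is only a rough sketch, not anything Rand presented: the baseline CTR figures, the `QueryStats` fields, and the function names are all made up for illustration, and you would swap in numbers from your own search analytics export.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical average CTR by ranking position (illustrative numbers only).
# Replace with baselines derived from your own data.
BASELINE_CTR = {1: 0.30, 2: 0.15, 3: 0.10, 4: 0.07, 5: 0.05}


@dataclass
class QueryStats:
    query: str
    position: int      # average ranking position, rounded to an integer
    impressions: int
    clicks: int


def relative_ctr(stats: QueryStats) -> Optional[float]:
    """Observed CTR divided by the baseline CTR for that position.

    Returns None when there is no baseline or no impressions.
    """
    baseline = BASELINE_CTR.get(stats.position)
    if baseline is None or stats.impressions == 0:
        return None
    observed = stats.clicks / stats.impressions
    return observed / baseline


def underperformers(rows: List[QueryStats], threshold: float = 1.0) -> List[str]:
    """Queries whose CTR is below the baseline for their position."""
    flagged = []
    for row in rows:
        ratio = relative_ctr(row)
        if ratio is not None and ratio < threshold:
            flagged.append(row.query)
    return flagged
```

A ratio below 1.0 means the listing gets fewer clicks than you would expect for its position, which makes its title, description, and URL good candidates for a rewrite.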

Action Items for Onsite SEO

- What questions are your potential customers asking, questions whose answers can’t be editorially curated? What questions actually motivate some of the keywords you rank for on the first page? What are some gaps in content that are hard to editorially curate? Craft sections of your content on your ranked page so it better answers those questions, and fills in these gaps.

- Build deep content. Add value and insight, stuff that can’t be replaced by a simple, one-line answer.

- Watch one of Google’s top engineers, Jeff Dean, talk about Deep Learning for building intelligent computer systems.

- Give users a reason and a way to come back to your site. Build loyalty. Email newsletters and social profiles can help. If you know a good content path that builds loyalty in potential customers, consider setting up remarketing campaigns that guide them through this funnel. If they drop off, draw them back in at the appropriate place. This can also be done with an email newsletter series.

- Look for pages that could benefit from some kind of interactivity, adding value to the goal of the page and helping the user fulfill their need. A good example of this is the NY Times page on how family income relates to chances to go to college. Look for future opportunities. Another example is Seer Interactive’s Pinterest guide, where the checklists contain items you can actually…check.

- Ask yourself: can I create content that is 10 times better than anything else listed for this query?

- Matthew Brown, in his talk on insane content, gave several examples of companies that broke the mold on what content can be, raising engagement and building loyalty by shattering expectations. When working on content ideas, be sure to make the presentation part of the discussion. How could you add some personalization? How can you put the user more in charge of their reading experience? Some examples he showed:

  - Coder - Tinder but for code snippets. Swipe left if the code is bad, swipe right if the code is good.

  - Krugman Battles the Austerians.

  - What is Code? - this particular feature took on a second life when it was put on GitHub so changes could be recommended. A great way of extending the life of an investment. It also adds some personalization in that it shows you how many visits you’ve made and how long you’ve spent reading.

- Check some of your richer content from the past. Have you created any assets as part of your content that can live on their own? Maybe with just a little improvement?

- Define who a loyal user is. Is it someone who comes to the site twice a month? 5 times a month? Define other metrics of success for a piece of content. To measure it, you need to know what it is.

- Find content that has a high bounce rate and/or low time on page. Why are people leaving? Does it have a poor layout? Hard to read? Takes too long to load?

- Exchange your Google+ social buttons for WhatsApp buttons. This is based on a large pool of aggregate data that Marshall Simmonds and his team have analyzed. Simmonds said CNN saw big gains when they did this.
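Two of the action items above, defining a loyal user and finding content with a high bounce rate or low time on page, come down to simple filters once you have the data exported. Here is a minimal Python sketch of both. The thresholds (two visits a month, 70% bounce rate, 30 seconds) and the field names are placeholders I picked for illustration, not recommendations from any of the talks.

```python
from collections import Counter
from datetime import date


def loyal_users(visits, min_visits_per_month=2):
    """Return the set of users who hit the visit threshold in any calendar month.

    visits: iterable of (user_id, visit_date) pairs.
    """
    per_month = Counter((user, d.year, d.month) for user, d in visits)
    return {user for (user, _, _), n in per_month.items()
            if n >= min_visits_per_month}


def pages_to_review(pages, max_bounce_rate=0.7, min_seconds_on_page=30):
    """Return URLs with a high bounce rate or a low average time on page.

    pages: iterable of dicts with 'url', 'bounce_rate' (0 to 1),
    and 'avg_seconds' keys (hypothetical export format).
    """
    return [p["url"] for p in pages
            if p["bounce_rate"] > max_bounce_rate
            or p["avg_seconds"] < min_seconds_on_page]
```

The point is less the code than the habit: write the definition down as a number, so you can tell later whether a piece of content actually moved it.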

SEO is an exciting discipline, one that, by necessity, tries to be a synthesis of so many other disciplines. Where else are you going to be talking about content strategy, which then leads into the theories behind deep machine learning, and then onto fostering a better user experience?

In part 2 of the recap, we’ll go over remarketing, building online communities, and digging deeper into your analytics.