In my last article, "Indexing Content from Drupal 8 using Elasticsearch", we saw how to configure a Drupal 8 site and an Elasticsearch server so that content changes are pushed automatically. In this article, we will implement a very simple search engine that only requires HTML and JavaScript. It is intentionally as simple as possible so you can grasp the key concepts and then adjust it to your project's needs.

The demo in action

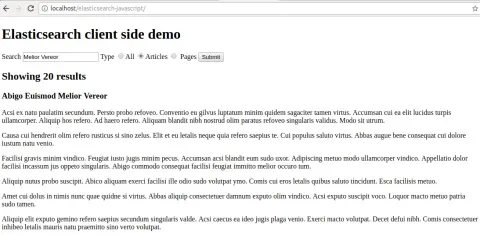

Here is a screenshot of the search demo, whose source code is available at this repository:

The above form contains a text input field that searches for a string among all full-text fields (in our case, the title and the body summary) and a filter by document type (articles or pages). When we click Search, the following query is submitted to Elasticsearch:

    {
      "size": 20,
      "query": {
        "bool": {
          "must": {
            "multi_match": {
              "query": "Melior Vereor",
              "fields": [
                "title^2",
                "summary_processed"
              ]
            }
          },
          "filter": {
            "term": {
              "type": "article"
            }
          }
        }
      }
    }

In the above query we are searching for the string Melior Vereor in the title and summary_processed fields, with a boost on the title so that documents matching there rank higher in the results. We are also filtering documents by article type.
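To connect the query to the form, here is a minimal sketch of how the browser code might build and submit it with the standard fetch API. The function names `buildSearchQuery` and `search`, and the `baseUrl` and `index` parameters, are assumptions made for illustration; they are not taken from the demo's source code.

```javascript
// Build the Elasticsearch request body from the two form values.
// The field names and the title boost mirror the query shown above.
function buildSearchQuery(text, type) {
  return {
    size: 20,
    query: {
      bool: {
        must: {
          multi_match: {
            query: text,
            fields: ["title^2", "summary_processed"],
          },
        },
        filter: {
          term: { type: type },
        },
      },
    },
  };
}

// Submit the query and return the matching documents.
// `baseUrl` and `index` are placeholders (assumptions), not the demo's values.
async function search(baseUrl, index, text, type) {
  const response = await fetch(`${baseUrl}/${index}/_search`, {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(buildSearchQuery(text, type)),
  });
  if (!response.ok) {
    throw new Error(`Search failed with HTTP status ${response.status}`);
  }
  const data = await response.json();
  // Each hit carries the indexed document under `_source`.
  return data.hits.hits.map((hit) => hit._source);
}
```

A click handler on the Search button could then call `search()` with the text input and the selected document type, and render the returned documents into the page.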

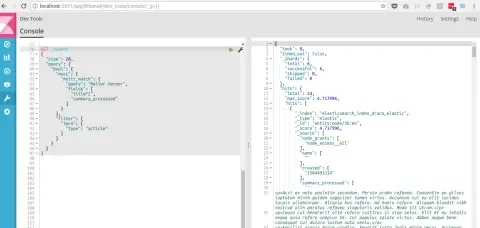

The best way to discover the Query API in Elasticsearch is by installing Kibana, a web UI to browse, analyze, and perform requests. Here is a screenshot taken while I was building the above query before coding it into JavaScript:

Configuring and securing Elasticsearch

While being able to perform client-side requests is great, it requires some configuration and a few security settings to restrict access to the Elasticsearch server. Here are the things you should do so that only the allowed applications (i.e. a Drupal site) can push content, while every other application is only permitted to perform search requests.

For additional settings have a look at the Elasticsearch configuration reference, which is organized by module.

Setting up network access and CORS

Elasticsearch binds by default to the local interface, meaning that it can only be reached locally. If we want to allow external access, we need to adjust the following setting in /etc/elasticsearch/elasticsearch.yml:

    # Set the bind address to a specific IP (IPv4 or IPv6):
    #
    network.host: [_local_, _eth0_]

_local_ is a special keyword that refers to the local host, while _eth0_ refers to the network interface whose identifier is eth0. I figured this out by executing ifconfig on the server where Elasticsearch is installed.

Next, we need to add the CORS settings so client-side applications like the one we saw above can perform requests from the browser. The following configuration, which should be appended to /etc/elasticsearch/elasticsearch.yml, only allows the HTTP methods needed to perform search requests:

    # CORS settings.
    http.cors.enabled: true
    http.cors.allow-origin: "*"
    http.cors.allow-methods: OPTIONS, HEAD, GET, POST

Locking the Elasticsearch server

There are a few ways to configure which applications are allowed to manage an Elasticsearch server:

- Install and configure the ReadOnly REST plugin.

- Use a web server that authorizes and proxies requests to Elasticsearch.

- Use a server-side application that acts as middleware, like a Node.js application.

This article covers the first two options, while the third one will be explained in an upcoming article.

Installing and configuring ReadOnly REST plugin

ReadOnly REST makes it easy to define the policy to manage an Elasticsearch server and is the option that we chose for this demo. We started by following the installation instructions from the plugin’s documentation and then we added the following configuration to /etc/elasticsearch/elasticsearch.yml:

    readonlyrest:
      access_control_rules:

      - name: "Accept all requests from localhost and Drupal"
        hosts: [127.0.0.1, the.drupal.IP.address]

      - name: "Everything else can only query the index."
        indices: ["elasticsearch_index_draco_elastic"]
        actions: ["indices:data/read/*"]

There are two policies above:

- Allow all requests coming from the local machine where Elasticsearch is installed and from the Drupal server. This allows us to manage indexes via the command line and lets Drupal push content changes to the index that we use in the demo.

- Everything else can only query the index. There we specify the index identifier and what actions other applications can perform. In this particular case, just searching.

With the above setup, applications trying to alter the Elasticsearch server will only be able to do so if they comply with the rules. Here is an example where I attempted to create an index against the Elasticsearch server via the command line:

    [juampy@carboncete ~/Dropbox/Projects/Turner]$ curl -i -XPUT 'https://elastic.some.site/foo?pretty'
    HTTP/1.1 403 Forbidden
    content-type: text/plain; charset=UTF-8
    content-length: 0

As expected, the ReadOnly REST plugin blocked it.
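The same check can be scripted. Below is a rough sketch, meant to be run from Node.js 18 or later (where fetch is available globally) on a machine that is not in the trusted hosts list. It is not intended for the browser, since the CORS settings above do not allow PUT and the browser would reject the request before it is sent. The function name `probeAccess` and the injectable `fetchImpl` parameter are assumptions made for illustration.

```javascript
// Probe an Elasticsearch server from an untrusted host:
// a write (creating an index) should be rejected, a search should succeed.
// `fetchImpl` defaults to the global fetch and can be replaced with a stub.
async function probeAccess(baseUrl, index, fetchImpl = fetch) {
  // Same request as the curl example: try to create an index named "foo".
  const write = await fetchImpl(`${baseUrl}/foo`, { method: "PUT" });
  // A plain search against the public index.
  const read = await fetchImpl(`${baseUrl}/${index}/_search`, { method: "GET" });
  return {
    writeBlocked: write.status === 403,
    readAllowed: read.ok,
  };
}
```

Calling `probeAccess("https://elastic.some.site", "elasticsearch_index_draco_elastic")` would be expected to resolve with both flags set to true if the two rules are in effect.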

Using a web server as a proxy to authorize requests

An alternative approach is to put Elasticsearch behind a web server that performs the authorization. If you need more control over the authorization process than the ReadOnly REST plugin provides, then this could be a good option. Here is an example that uses nginx as a proxy.
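Whichever web server sits in front of Elasticsearch, the decision it has to make is small. The sketch below expresses that decision as a plain JavaScript function, only to stay in the language used in this article; it is not an nginx configuration and not the setup used for the demo. The names `TRUSTED_HOSTS`, `SEARCH_METHODS`, `PUBLIC_INDEX`, and `isRequestAllowed` are hypothetical, and the policy simply mirrors the two ReadOnly REST rules shown earlier.

```javascript
// Hosts that may do anything (hypothetical list; add the Drupal server's IP).
const TRUSTED_HOSTS = ["127.0.0.1"];
// Methods that a search-only client needs.
const SEARCH_METHODS = ["OPTIONS", "HEAD", "GET", "POST"];
// The only index exposed to untrusted clients.
const PUBLIC_INDEX = "elasticsearch_index_draco_elastic";

// Decide whether a request should be forwarded to Elasticsearch.
// `path` is the URL path without the query string.
function isRequestAllowed(remoteIp, method, path) {
  if (TRUSTED_HOSTS.includes(remoteIp)) {
    return true; // Trusted hosts can manage the server.
  }
  // Everyone else may only reach the search endpoint of the public index.
  return SEARCH_METHODS.includes(method) && path === `/${PUBLIC_INDEX}/_search`;
}
```

A proxy would return 403 for any request where this check fails and pass everything else through to Elasticsearch unchanged.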

Go search!

You have now seen how to query Elasticsearch from the browser using just HTML and JavaScript, and how to configure and secure the Elasticsearch server. In the next article, we will take a look at how to use a Node.js application that presents a search form, processes the form submission by querying Elasticsearch, and prints the results on screen.

Acknowledgements

Thanks to Matt Oliveira for introducing me to Kibana and for his editorial and technical review.