Over the past few months I have been banging my head against a problem at MSNBC: importing the site's extremely large database to my local environment took more than two hours. With a fast internet connection, the database could be downloaded in a matter of minutes, but importing it for testing still took far too long. Ugh!

In this article I'll walk through the troubleshooting process I used to improve things, and the approaches I tried — eventually optimizing the several-hour import to a mere 10-15 minutes.

The starting point

This was the set up when I started working on the problem:

- The development server has a 5GB database with 650 tables with a total of 27,078,694 rows.

- Drush 6.3.0 with sql-sync command adjusted to skip data from unneeded tables.

- My local laptop’s CPU has an Intel Core i7-3630QM @ 2.40GHz and a Solid State Drive.

The output of importing the development environment’s database in my local environment showed how serious the problem was:

juampy@juampy-box: $ time drush -y sql-sync @msnbc.dev @self --create-db

You will destroy data in msnbc and replace with data from someserver/msnbcdev.

Do you really want to continue? (y/n): y

Command dispatch completed. [ok]

real 120m53.545s

Wall-clock time was two hours, and it was causing a lot of frustration within the team. Having such a slow process was a big limitation when someone wanted to peer review pull requests. To avoid the delay, people were only updating their local database once a week instead of every couple days — making regressions much more likely when exporting configuration from the database into code.

Analyzing the database

My first attempt at a solution was reducing the content in the development database. If the size of the database could be reduced to about 1GB, then the import process would be fast enough. Therefore, I inspected which tables were the biggest and started analyzing what I could trim:

mysql> SELECT TABLE_NAME, table_rows, data_length, index_length,

round(((data_length + index_length) / 1024 / 1024),2) "Size in MB"

FROM information_schema.TABLES

WHERE table_schema = "msnbc"

ORDER BY round(((data_length + index_length) / 1024 / 1024),2) DESC

LIMIT 0,15;

+---------------------------------------------+------------+-------------+--------------+------------+

| TABLE_NAME | table_rows | data_length | index_length | Size in MB |

+---------------------------------------------+------------+-------------+--------------+------------+

| field_revision_body | 362040 | 1453326336 | 104611840 | 1485.77 |

| field_revision_field_theplatform_transcript | 45917 | 1241251840 | 49938432 | 1231.38 |

| field_revision_field_homepage_item_text | 1338743 | 436125696 | 580747264 | 969.77 |

| field_revision_field_homepage_item_headline | 1254389 | 264192000 | 641843200 | 864.06 |

| field_revision_field_homepage_link | 947927 | 333365248 | 512671744 | 806.84 |

| field_revision_field_homepage_reference | 1335704 | 177143808 | 629227520 | 769.02 |

| field_revision_field_homepage_item_template | 1243619 | 177176576 | 598425600 | 739.67 |

| field_revision_field_issues | 757708 | 90931200 | 295092224 | 368.14 |

| field_revision_field_homepage_item_image | 584289 | 75218944 | 263585792 | 323.11 |

| field_revision_field_homepage_items | 1041752 | 72515584 | 239206400 | 297.28 |

| metatag | 241023 | 311394304 | 0 | 296.97 |

| field_revision_field_short_summary | 467445 | 144556032 | 162529280 | 292.86 |

| field_revision_field_original_topics | 387105 | 82477056 | 189874176 | 259.73 |

| field_data_body | 91027 | 254656512 | 15171584 | 257.33 |

| field_data_field_theplatform_transcript | 18638 | 241745920 | 11911168 | 241.91 |

+---------------------------------------------+------------+-------------+--------------+------------+

15 rows in set (2.52 sec)

What did I learn from that data?

field_body_revisionwas the biggest table in the database. There were many revisions, and many of them had a considerable amount of HTML. I thought about simply trimming this table and field_body, but doing it without breaking HTML would be tricky.field_revision_field_theplatform_transcriptwas the second biggest table. I looked at the source code to see how it was being used and asked the development team if it was needed for development and found out that I could trim this value without damaging the development experience. An easy win!- Fields used on the homepage had tons of revisions. One reason was heavy use of the Field Collection module on a multi-value Entity Reference field. Each node save created a cascade of new revisions that were good candidates for removal.

Trimming long tables

All of this was promising, and I set up a standard process for slimming down our development databases. Every night, the production database is copied into the development environment. MSNBC is hosted at Acquia; which offers a set of hooks to operate on environment operations such as copying a database, files or deploying a release. I wanted to trim down the size of the table field_revision_field_theplatform_transcript so I added the following statements to the post-db-copy hook for the development environment:

# Truncate long strings for field_theplatform_transcript.

drush6 @$site.$target_env -v sql-query \

'update field_data_field_theplatform_transcript

set field_theplatform_transcript_value =

substring(field_theplatform_transcript_value, 0, 200)'

drush6 @$site.$target_env -v sql-query \

'update field_revision_field_theplatform_transcript

set field_theplatform_transcript_value =

substring(field_theplatform_transcript_value, 0, 200)'

The above queries trim a field of a couple tables which contains very long strings of plain text. Those steps consistently reduce the database size by 1GB. Here is a sample output when importing the development database into my local environment after this change was made:

juampy@juampy-box: $ time drush -y sql-sync @msnbc.dev @self --create-db

You will destroy data in msnbc and replace with data from someserver/msnbcdev.

Do you really want to continue? (y/n): y

Command dispatch completed. [ok]

real 105m32.877s

That reduced the total import time to an hour and forty five minutes. It was a step forward, but we still had more work to do. The next thing to try was slimming down revision data.

Cutting down revisions

Some nodes in MSNBC’s database had hundreds of revisions. developers and editors don’t need all of them but content is gold, so we can’t just go wipe them out. However, If we could cut down the number of revisions in development, then the database size would go down considerably.

I looked for modules at Drupal.org that could help me to accomplish this task and found Node Revision Delete. It certainly looked promising, but I realized that I had to put a bit of work into it so it could delete a large amount of revisions in one go. I added a Drush command to Node Revision Delete which used Batch API so it could run over a long period of time deleting old revisions. When I tested the command locally to keep just the last 10 revisions of articles, it ran for hours. The problem is that the function node_revision_delete() triggers several expensive hooks, and slows the process down quite a bit.

This made me to look at the production database. Did we need that many revisions? I asked the editorial team at MSNBC and we got confirmation that we could stop revisioning some content types. This was great news, as it would slow the database's future growth as well. I went one step further and configured Node Revision Delete so it would delete old revisions of content on every cron run. Unfortunately, our testing missed a bug in Field Collection module: deleting a revision would delete an entire field collection item. This was one of the most stressful bugs I have ever dealt with: it showed up on production and was deleting fresh content every minute. Lesson learned: be careful with any logic that deletes content!

Because of the concerns about lost content, and the fact that Node Revision Delete was still slow to do its work, we uninstalled the module and restored the deleted data. Reducing the number of revisioned content types would slow its growth, but we wouldn't try to remove historical revisions for for now.

Deleting entire nodes from the development database

Our next idea was deleting old nodes on a development environment, and sharing that slimmed down database with other developers. After testing, I found out that I could delete articles and videos published before 2013 while still leaving plenty of content for testing. To test it, I wrote a Drush script that picked a list of nids and used Batch API to delete them. Unfortunately, this was still too slow to help much. Each call to node_delete() took around 3 seconds. With hundreds of thousands of nodes this was not a valid option either.

At this point, I was out of ideas. Throughout this effort, I had been sharing my progress with other developers at Lullabot through Yammer. Some folks like Andrew Berry and Mateu Aguiló suggested I take a look at MySQL parallel, a set of scripts that break up a database into a set of SQL files (one per table) and import them in parallel using the GNU Parallel project. Several Lullabot developers were using it for the NBC.com project, which also had a database in the 5-6 GB range, and it looked promising.

Importing tables via parallel processing

Mateu showed me how they were using this tool at NBC TV, and it gave me an idea: I could write a wrapper for these scripts in Drush, allowing the other members of the development team to use it without as much setup. Coincidentally, that week Dave Reid shared one of his latest creations on one of Lullabot's internal Show & Tell talks: Concurrent Queue. While examining that project's code, I discovered that deep down in its guts, Drush has a mechanism to run concurrent processes in parallel. Eureka! I now had a feasible plan: a new Drush command that would either use GNU Parallel to import MySQL tables, or fall back to Drush’s drush_invoke_concurrent(), to speed up the import process.

The result of that work was SyncDb, a Drupal project containing two commands:

drush dumpdbextracts a database into separate SQL files; one per table. Structure tables would be exported into a single file called structure.sql.drush syncdbdownloads SQL files and imports them in parallel. It detects if GNU Parallel is available to import these tables using as much CPU as possible. If GNU Parallel is not available, it falls back todrush_invoke_concurrent(), which spins up a sub-process per table import.

Here is a sample output when running drush syncdb:

juampy@juampy-box: $ time drush syncdb @msnbc.dev

You will destroy data in msnbc and replace with data from someserver/msnbcdev.

Do you really want to continue? (y/n): y

Command dispatch completed. [ok]

real 13m10.877s

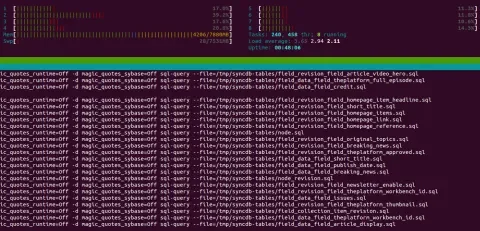

13 minutes! I could not believe it. I asked a few folks at MSNBC to test it and the time averaged from 12 to 20 minutes. This was a big relief: although trimming content helped, making better use of CPU resources was the big breakthrough. Here is a screenshot of my laptop while running the job. Note that all CPUs are working, and there are 8 concurrent threads working on the import:

Next steps and conclusion

There is still a chance to optimize the process even more. In the future, I'll be looking into several potential improvements:

- My friend Pedro González suggested me to look at Drush SQL Sync Pipe, which could speed up downloading the database dump.

- GNU Parallel has many options to make an even better use of your CPU resources. Andrew Berry told me that we could try using the xargs command, which supports parallel processing as well and is available by default in all *nix systems.

- Get back to the root of the problem and see if we can reduce production’s or development’s database size.

- Upgrade to Drush 7 in the server. Drush 7 removed the option

--skip-add-lockswhen dumping a database, which speeds up imports considerably.

Does importing the database take a long time in your project? Then have a look at the approaches I covered in this article. Optimizing these time consuming development steps can vary widely from project to project, so I am sure there are many more ways to solve the problem. I look forward for your feedback!