In my previous article about the serializer component, I touched on the basic concepts involved when serializing an object. To summarize, serialization is the combination of encoding and normalization. Normalizers simplify complex objects, like User or ComplexDataDefinition. Denormalizers perform the reverse operation. Using a structured array of data, they generate complex objects like the ones listed above.

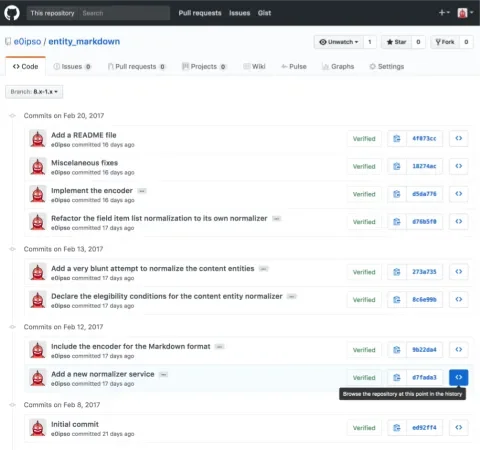

In this article, I will focus on the Drupal integration of the Symfony serializer component. For this, I will guide you step-by-step through a module I created as an example. You can find the module at https://github.com/e0ipso/entity_markdown. I have created a separate commit for each step of the process, and each section of this article begins with a link to the code for that step. However, you can use the GitHub UI to browse the code at any time and see the diff.

When this module is finished, you will be able to transform any content entity into a Markdown representation of it. Rendering a content entity with Markdown might be useful if you wanted to send an email summary of a node, for instance, but the real motivation is to show how serialization can be important outside the context of web services.

Add a new normalizer service

These are the changes for this step. You can browse the state of the code at this step in here.

Symfony’s serializer component begins with a list of normalizer classes. Whenever an object needs to be normalized or serialized, the serializer will loop through the available normalizers to find one that declares support for the type of object at hand, in our case a content entity. If you want to add a class to the list of eligible normalizers, you need to create a new tagged service.

A tagged service is just a regular class definition that comes with an entry in the mymodule.services.yml so the service container can find it and instantiate it whenever appropriate. For a service to be a tagged service, you need to add a tags property with a name. You can also add a priority integer to convey precedence with respect to services tagged with the same name. For a normalizer to be recognized by the serialization module, you need to add the tag name normalizer.

When Drupal core compiles the service container, our newly created tagged service will be added to the serializer list in what is called a compiler pass. This is the place in Drupal core where that happens. The service container is then cached for performance reasons. That is why you need to clear your caches when you add a new normalizer.
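To make the role of the priority integer more concrete, here is a minimal sketch in plain PHP of how tagged services could be ordered before they are handed to the serializer. It is not Drupal's compiler pass: the service IDs, the priorities, and the sortByPriority() helper are all invented for illustration, and I am assuming that a higher priority means the service is tried earlier.

```php
<?php

declare(strict_types=1);

// Hypothetical tagged services, keyed by service ID. In a real module these
// would come from the tags property in mymodule.services.yml.
$taggedServices = [
  'entity_markdown.normalizer.content_entity' => ['name' => 'normalizer', 'priority' => 10],
  'some_module.normalizer.generic' => ['name' => 'normalizer', 'priority' => 0],
  'other_module.normalizer.override' => ['name' => 'normalizer', 'priority' => 20],
];

/**
 * Returns the service IDs ordered from highest to lowest priority.
 */
function sortByPriority(array $taggedServices): array {
  $byPriority = [];
  foreach ($taggedServices as $id => $tag) {
    // Services without an explicit priority default to 0 in this sketch.
    $byPriority[$tag['priority'] ?? 0][] = $id;
  }
  // Highest priority first.
  krsort($byPriority);
  return $byPriority === [] ? [] : array_merge(...array_values($byPriority));
}

$ordered = sortByPriority($taggedServices);
```

In this sketch the normalizer with priority 20 ends up first in the list, so it would be asked first whether it supports a given object.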

Our normalizer is an empty class at the moment. We will fix that in a moment. First, we need to turn our attention to another collection of services that need to be added to the serializer, the encoders.

Include the encoder for the Markdown format

These are the changes for this step. You can browse the state of the code at this step in here.

Similarly to a normalizer, the encoder is also added to the serialization system via a tagged service. It is crucial that this service implements EncoderInterface. Note that at this stage, the encoder does not contain its most important method, encode(). However, you can see that it contains supportsEncoding(). When the serializer component needs to encode a structured array, it will test all the available encoders (those tagged services) by executing supportsEncoding() and passing the format specified by the user. In our case, if the user specifies the 'markdown' format, our encoder will be used to transform the structured array into a string, because supportsEncoding() will return TRUE. To do the actual encoding it will use the encode() method. We will write that method later. First, let me describe the normalization process.
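The selection logic described above can be sketched in a few lines of plain PHP. This is a simplified stand-in, not the module's code: MarkdownEncoderSketch and findEncoder() are hypothetical names, and a real encoder would implement Symfony's EncoderInterface rather than being a bare class.

```php
<?php

declare(strict_types=1);

// Hypothetical stand-in for an encoder that only knows the 'markdown' format.
final class MarkdownEncoderSketch {

  public function supportsEncoding(string $format): bool {
    return $format === 'markdown';
  }

}

/**
 * Returns the first encoder that declares support for the given format.
 *
 * This sketch returns NULL when nothing matches; it does not try to mimic
 * how the real component reports that situation.
 */
function findEncoder(array $encoders, string $format): ?object {
  foreach ($encoders as $encoder) {
    if ($encoder->supportsEncoding($format)) {
      return $encoder;
    }
  }
  return NULL;
}

$encoders = [new MarkdownEncoderSketch()];
$forMarkdown = findEncoder($encoders, 'markdown');
$forJson = findEncoder($encoders, 'json');
```

Here $forMarkdown is our encoder, while $forJson is NULL because no encoder in the list claims the 'json' format.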

Normalize content entities

The normalization will differ each time. It depends on the format you want to turn your objects into, and it depends on the type of objects you want to transform. In our example, we want to turn a content entity into a Markdown document.

For that to happen, the serializer will need to be able to:

- Know when to use our normalizer class.

- Normalize the content entity.

- Normalize any field in the content entity.

- Normalize all the properties in every field.

Discover our custom normalizer

These are the changes for this step. You can browse the state of the code at this step in here.

For a normalizer to be considered a good fit for a given object it needs to meet two conditions:

- Implement the NormalizerInterface.

- Return TRUE when calling supportsNormalization() with the object to normalize and the format to normalize to.

The process is nearly the same as the one we used to determine which encoder to use. The main difference is that we also pass the object to normalize to the supportsNormalization() method. That is a critical part, since it is very common to have multiple normalizers for the same format, depending on the type of object that needs to be normalized. A Node object will need different code to turn it into a structured array than an HttpException. We take that into account in our example by checking if the object being normalized is an instance of ContentEntityInterface.
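As a rough illustration of that double check (format and object type), here is a self-contained sketch. The interface and classes are hypothetical stand-ins so the snippet runs outside Drupal; in the module the instanceof check would target Drupal's ContentEntityInterface.

```php
<?php

declare(strict_types=1);

// Hypothetical stand-in for Drupal's ContentEntityInterface.
interface ContentEntitySketchInterface {}

// Hypothetical stand-in for a node.
final class NodeSketch implements ContentEntitySketchInterface {}

final class ContentEntityNormalizerSketch {

  public function supportsNormalization($data, ?string $format = NULL): bool {
    // Both conditions must hold: the right format and the right kind of object.
    return $format === 'markdown' && $data instanceof ContentEntitySketchInterface;
  }

}

$normalizer = new ContentEntityNormalizerSketch();
$supportsNode = $normalizer->supportsNormalization(new NodeSketch(), 'markdown');
$supportsException = $normalizer->supportsNormalization(new \RuntimeException('Not an entity'), 'markdown');
$supportsJson = $normalizer->supportsNormalization(new NodeSketch(), 'json');
```

Only the first call returns TRUE. The exception is rejected because of its type, and the last call is rejected because of the format, which leaves room for other normalizers to handle those cases.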

Normalize the content entity

These are the changes for this step. You can browse the state of the code at this step in here.

This step contains a first attempt to normalize the content entity that gets passed as an argument to the normalize() method of our normalizer.

Imagine that our requirements are that the resulting Markdown document needs to include an introductory section with the entity label, entity type, bundle and language. After that, we need a list with all the field names and the values of their properties. For example, the body field of a node will result in the name field_body and the values for format, summary and value. In addition to that, any field can be single or multivalue, so we will take that into consideration.

To fulfill these requirements, I've written a bunch of code that deals with the specific use case of normalizing a content entity into a structured array ready to be encoded into Markdown. I don’t think that the specific code is relevant to explain how normalization works, but I've added code comments to help you follow my logic.

You may have spotted the presence of a helper method called normalizeFieldItemValue() and a comment that says Now transform the field into a string version of it. Those two are big red flags suggesting that our normalizer is doing more than it should, and that it’s implicitly normalizing objects that are not of type ContentEntityInterface but of type FieldItemListInterface and FieldItemInterface. In the next section we will refactor the code in ContentEntityNormalizer to defer that implicit normalization to the serializer.

Recursive normalization

These are the changes for this step. You can browse the state of the code at this step in here.

When the serializer is initialized with the list of normalizers, for each one it checks if they implement SerializerAwareInterface. For the ones that do, the serializer adds a reference to itself into them. That way you can serialize/normalize nested objects during the normalization process. You can see how our ContentEntityNormalizer extends from SerializerAwareNormalizer, which implements the aforementioned interface. The practical impact of that is that we can use $this->serializer->normalize() from within our ContentEntityNormalizer. We will use that to normalize all the field lists in the entity and the field items inside of those.

First turn your focus to the new version of the ContentEntityNormalizer. You can see how the normalizer is divided into parts that are specific to the entity, like the label, the entity type, the bundle, and the language. The normalization for each field item list is now done in a single line: $this->serializer->normalize($field_item_list, $format, $context);. We have reduced the LOC to almost half, and the cyclomatic complexity of the class even further. This has a great impact on the maintainability of the code.

All this code has now been moved to two different normalizers:

- FieldItemListNormalizer contains the code that deals with normalizing single and multivalue fields. It uses the serializer to normalize each individual field item.

- FieldItemNormalizer contains the code that normalizes the individual field item values and their properties/columns.

You can see that for the serializer to be able to recognize our new FieldItemListNormalizer and FieldItemNormalizer classes, we need to add them to the service container, just like we did for the ContentEntityInterface normalizer.
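The delegation between those two normalizers can be sketched with a miniature serializer written in plain PHP. Everything here is a hypothetical stand-in (the class names end in Sketch to make that obvious), and the array shape returned by the field item list normalizer is my own assumption. The point is only to show a normalizer calling back into the serializer for nested objects.

```php
<?php

declare(strict_types=1);

final class FieldItemSketch {
  public array $properties;

  public function __construct(array $properties) {
    $this->properties = $properties;
  }
}

final class FieldItemListSketch {
  public string $name;
  public array $items;

  public function __construct(string $name, array $items) {
    $this->name = $name;
    $this->items = $items;
  }
}

// Plays the role of SerializerAwareInterface in this sketch.
abstract class SerializerAwareSketch {
  public ?SerializerSketch $serializer = NULL;
}

final class FieldItemNormalizerSketch extends SerializerAwareSketch {
  public function supportsNormalization($data, string $format): bool {
    return $format === 'markdown' && $data instanceof FieldItemSketch;
  }

  public function normalize($data, string $format): array {
    return $data->properties;
  }
}

final class FieldItemListNormalizerSketch extends SerializerAwareSketch {
  public function supportsNormalization($data, string $format): bool {
    return $format === 'markdown' && $data instanceof FieldItemListSketch;
  }

  public function normalize($data, string $format): array {
    $values = [];
    foreach ($data->items as $item) {
      // Hand each item back to the serializer instead of normalizing it here.
      $values[] = $this->serializer->normalize($item, $format);
    }
    return ['name' => $data->name, 'values' => $values];
  }
}

final class SerializerSketch {
  private array $normalizers;

  public function __construct(array $normalizers) {
    $this->normalizers = $normalizers;
    foreach ($this->normalizers as $normalizer) {
      // Give serializer-aware normalizers a reference back to the serializer.
      if ($normalizer instanceof SerializerAwareSketch) {
        $normalizer->serializer = $this;
      }
    }
  }

  public function normalize($data, string $format) {
    foreach ($this->normalizers as $normalizer) {
      if ($normalizer->supportsNormalization($data, $format)) {
        return $normalizer->normalize($data, $format);
      }
    }
    throw new \RuntimeException('No normalizer supports this data.');
  }
}

$serializer = new SerializerSketch([
  new FieldItemListNormalizerSketch(),
  new FieldItemNormalizerSketch(),
]);
$tags = new FieldItemListSketch('field_tags', [
  new FieldItemSketch(['target_id' => 1]),
  new FieldItemSketch(['target_id' => 2]),
]);
$normalized = $serializer->normalize($tags, 'markdown');
```

Note that FieldItemListNormalizerSketch never inspects a field item itself. A content entity normalizer would follow the same pattern one level up, passing each field item list to the serializer.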

A very nice side effect of this refactor, in addition to the maintainability improvement, is that a third-party module can build upon our code more easily. Imagine that this third-party module wants to make all field labels bold. Before the refactor, they would need to introduce a normalizer for content entities, and play with the service priority so it gets selected before ours. That normalizer would contain a large copy-and-paste of our code just to make the desired tweaks. After the refactor, the third-party module would only need a normalizer for the field item list (which outputs the field label) with a higher priority than ours. That is a great win for extensibility.

Implement the encoder

As we said above, the most important part of the encoder is encapsulated in the encode() method. That is the method in charge of turning the structured array from the normalization process into a string. In our particular case, we treat each entry of the normalized array as a line in the output, then we add any prefix or suffix that may apply.
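A minimal sketch of that idea follows. The shape of each entry (a text key with optional prefix and suffix keys) is an assumption I made for this example, and encodeMarkdownSketch() is a hypothetical function, so do not expect it to match the array that the module's normalizers actually produce.

```php
<?php

declare(strict_types=1);

/**
 * Turns a list of normalized entries into a Markdown string.
 *
 * Each entry becomes one line: prefix + text + suffix. The entry shape is an
 * assumption made for this sketch.
 */
function encodeMarkdownSketch(array $entries): string {
  $lines = [];
  foreach ($entries as $entry) {
    $lines[] = ($entry['prefix'] ?? '') . $entry['text'] . ($entry['suffix'] ?? '');
  }
  return implode("\n", $lines);
}

$markdown = encodeMarkdownSketch([
  ['text' => 'My article', 'prefix' => '# '],
  ['text' => 'Type: node', 'prefix' => '- '],
  ['text' => 'Body', 'prefix' => '**', 'suffix' => '**'],
]);
```

With this input the result is a three-line string: a heading, a list item, and a bold label.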

Further development

At this point the Entity Markdown module is ready to take any entity and turn it into a Markdown document. The only question is how to execute the serializer. If you want to execute it programmatically, you only need to do:

\Drupal::service('serializer')->serialize(Node::load(1), 'markdown');

However, there are other options. You could declare a REST format, as the HAL module does, so you can make an HTTP request to http://example.org/node/1?_format=markdown and get a Markdown representation of the node in response (after configuring the corresponding REST settings).

Conclusion

The serialization system is a powerful tool that allows you to reshape an object to suit your needs. The key concepts that you need to understand when creating a custom serialization are:

- Tagged services for discovery

- How a normalizer and an encoder get chosen for the task

- How recursive serialization can improve maintainability and limit complexity.

Once you are aware of and familiar with the serializer component, you will start noticing use cases for it. Instead of using hard-coded solutions with poor extensibility and maintainability, start leveraging the serialization system.